A few years ago, I was working on a platform product that had everything going for it: strong core technology, enthusiastic early adopters, and a clear market need. We were growing steadily. Then one quarter, growth stalled. Not because we lost customers, but because we stopped winning new ones.

When we dug into the win/loss data, the pattern was clear. Prospects were not turning us down because our product was weak. They were choosing competitors because those competitors had integrations with the tools the prospects already used. Our product was an island. The competitor’s product was a neighborhood.

That was my introduction to ecosystem strategy. The product itself matters, but the network of partners, integrations, and developers around it often matters more.

What Is an Ecosystem Strategy?

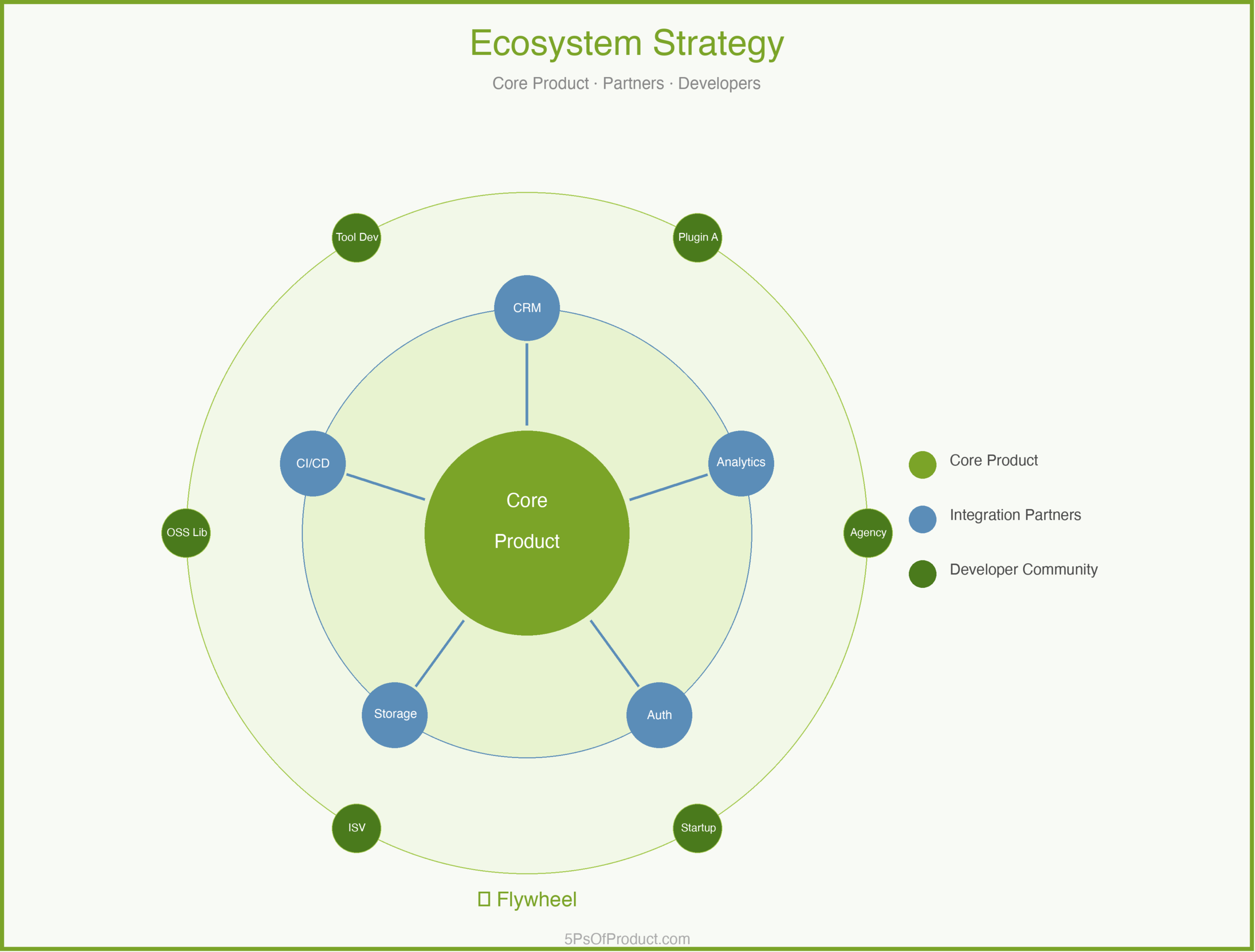

An ecosystem strategy is the deliberate practice of mapping and building the network of partners, developers, integrations, and complementary products that make your platform more valuable. It is the recognition that in a connected world, your product’s value depends partly on what surrounds it.

In the 5Ps framework, ecosystem strategy sits in the Problem phase. Understanding your ecosystem is part of understanding the problem space. Before you can solve a customer’s problem, you need to understand the full context of their workflow, and that workflow almost always extends beyond your product’s boundaries.

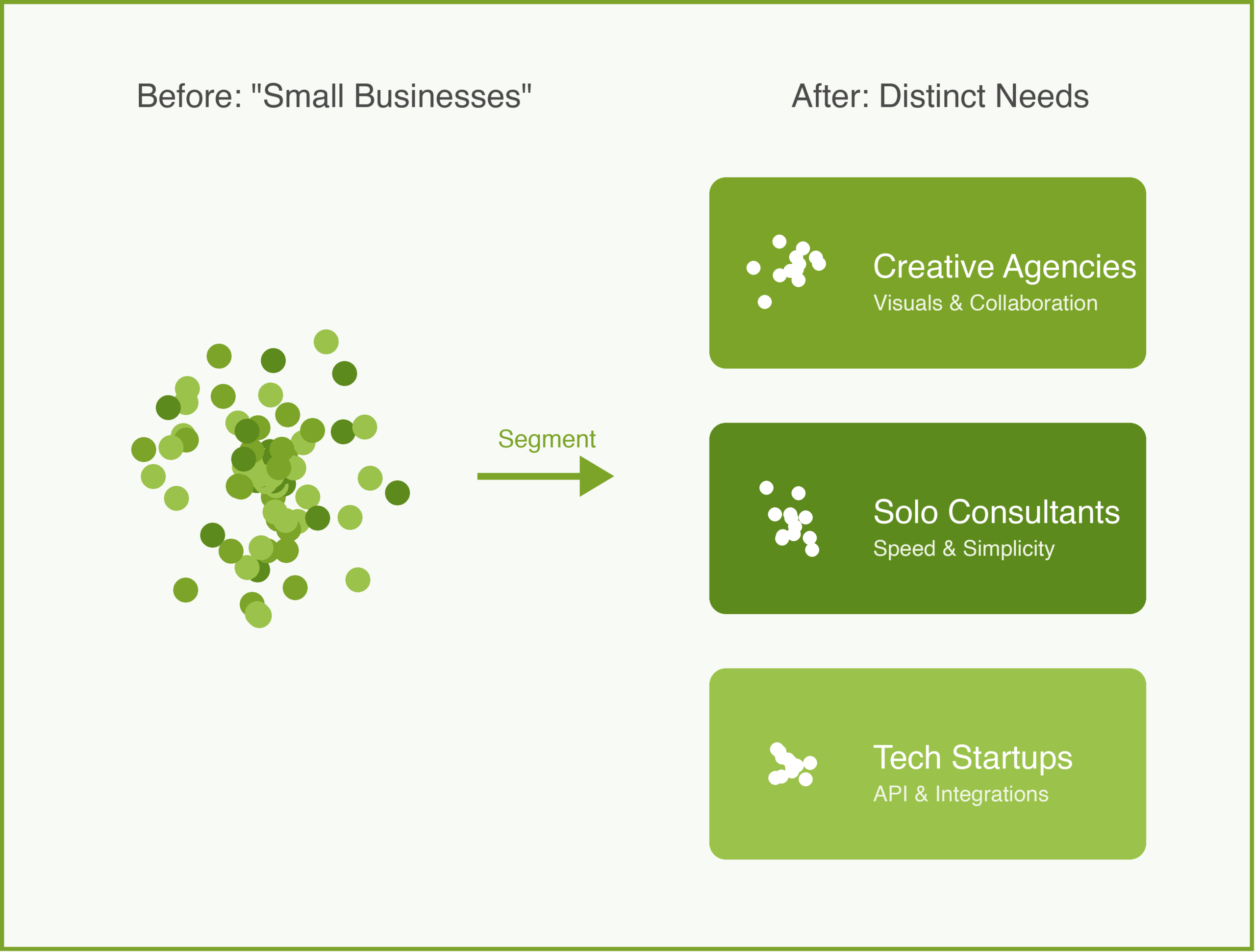

Your customer segmentation tells you who your customers are. Your ecosystem strategy tells you what world those customers live in.

Why Ecosystems Matter

The core product gets you in the door. The ecosystem keeps you in the building.

When a customer evaluates a platform, they are not just evaluating features. They are evaluating whether the platform fits into their existing stack. Can it connect to their CRM? Does it work with their analytics tools? Can their team build custom workflows on top of it?

Geoffrey Moore explores this in Crossing the Chasm, where he describes how the “whole product” extends far beyond what you ship. The core product is necessary but not sufficient. Customers need the surrounding services, integrations, and community to get full value.

This creates a reinforcing cycle. More integrations attract more customers. More customers attract more partners. More partners build more integrations. Once this cycle is spinning, it becomes very difficult for competitors to disrupt.

The Three Layers of an Ecosystem

1. Integration Partners

These are the products your platform connects to. They answer the most basic customer question: “Will this work with what I already have?”

The key decision is whether to build integrations yourself or provide tools for partners to build them. Building them yourself is faster for the first few but doesn’t scale. Partner-built integrations scale but require investment in documentation, APIs, and support.

2. Solution Partners

These are the consultants, agencies, and system integrators who help customers implement and extend your platform. One good solution partner can bring you dozens of customers because they recommend your product as part of every engagement.

Solution partners need training, certification, and leads. This is a real investment, not just a partner portal and a logo on your website.

3. Developer Community

These are the independent developers and startups who build on top of your platform. A developer community is the most powerful layer because it creates value you could never build yourself. But it is also the hardest to cultivate because developers are skeptical of platforms that might change APIs or terms without warning.

A Concrete Example: CloudMesh

Imagine a cloud infrastructure platform called “CloudMesh” that provides compute, storage, and networking services. CloudMesh has solid technology but limited market share.

CloudMesh’s PM maps the ecosystem across all three layers:

Integration Partners: Customers keep asking for integrations with popular monitoring tools, CI/CD platforms, and identity providers. CloudMesh has built two integrations in-house, but competitors offer fifteen or more. The PM realizes they need an integration marketplace, not more in-house connectors.

Solution Partners: CloudMesh has no formal partner program. A handful of consulting firms have figured out CloudMesh on their own, but they receive no support. The PM launches a lightweight certification program. Within six months, certified partners are generating 30% of new customer referrals.

Developer Community: CloudMesh has good APIs but poor documentation. The PM invests in a documentation overhaul, a sample applications library, and a dedicated developer advocate. Over the following year, third-party plugins grow from 12 to 85.

The ecosystem didn’t replace the core product. It amplified it.

The Ecosystem Flywheel

The real power of an ecosystem is the flywheel effect. More integrations make the platform more attractive to customers. More customers make the platform more attractive to solution partners. More solution partners bring more customers. More customers attract more developers. More developers build more integrations.

The hardest part is getting the flywheel started. In the early days, you need to do things that don’t scale. Build integrations yourself. Recruit partners one by one. Write the documentation personally. Invest in the community before the community invests in you.

Building vs. Buying an Ecosystem

You can grow an ecosystem yourself, or you can acquire a company that already has one.

Building is slower but gives you more control. You set the terms, shape the culture, and maintain the quality bar. The risk is that it takes years to reach critical mass.

Acquiring is faster but comes with integration debt. You get instant access to a partner network, but those partners have existing expectations. Merging two partner programs is like merging two cultures.

In my experience, the best approach is to build the foundation yourself and grow organically. You cannot shortcut trust.

How to Use With AI

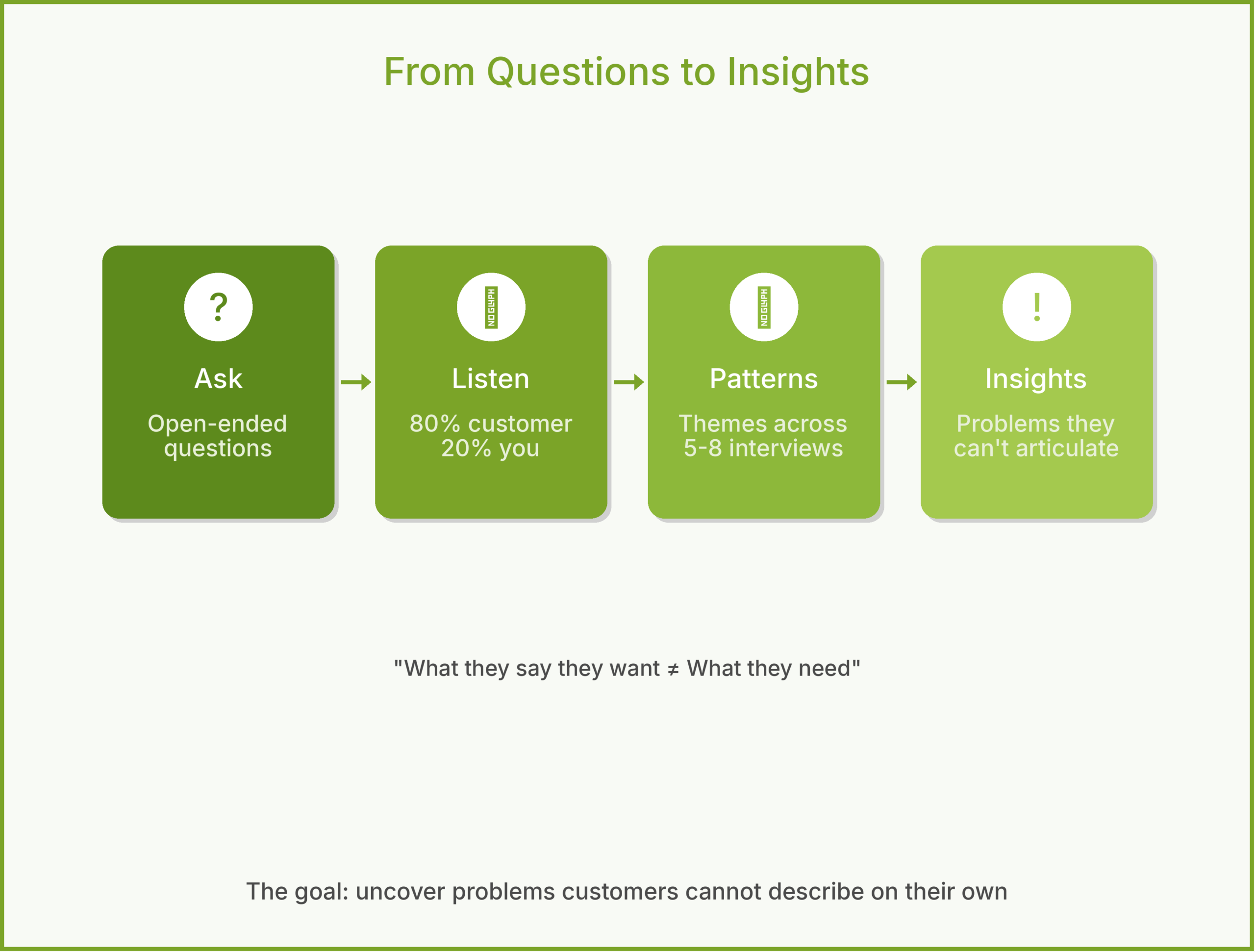

1. The Ecosystem Mapper

Understanding your ecosystem starts with knowing what exists.

Prompt: “Based on this list of customer tech stacks, identify the 5 most common tools that appear alongside our product. For each, describe the integration use case and estimate how many customers would benefit from a native integration.”
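If your customer tech stacks are already in structured form, the counting half of this prompt can be done directly and then handed to the AI for the use-case descriptions. The sketch below is only an illustration: the customer names, tool categories, and the `top_missing_integrations` helper are all hypothetical, and it assumes each stack is a simple set of tool names.

```python
from collections import Counter

# Hypothetical data: each customer's stack is a set of tool names.
# None of these customers or tools come from a real dataset.
customer_stacks = {
    "customer_a": {"crm", "monitoring", "chat"},
    "customer_b": {"monitoring", "identity_provider", "chat"},
    "customer_c": {"crm", "ci_cd", "chat"},
    "customer_d": {"identity_provider", "analytics", "chat"},
}

# Hypothetical set of integrations the platform already ships natively.
existing_integrations = {"chat"}


def top_missing_integrations(stacks, existing, n=5):
    """Return the n most common tools that lack a native integration.

    Each result is (tool, number_of_customers_using_it). Ties are broken
    alphabetically so the ranking is stable between runs.
    """
    counts = Counter(
        tool
        for tools in stacks.values()
        for tool in tools
        if tool not in existing
    )
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))[:n]


ranked = top_missing_integrations(customer_stacks, existing_integrations)
for tool, customers in ranked:
    share = customers / len(customer_stacks)
    print(f"{tool}: {customers} customers ({share:.0%})")
```

The ranked list gives the AI a concrete starting point, so the prompt can focus on describing integration use cases rather than on tallying.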

2. The Partner Pitch Generator

Recruiting partners requires tailored outreach.

Prompt: “Write a one-paragraph partnership pitch to [Partner Type]. Focus on what they gain: access to our customer base, co-marketing opportunities, and revenue share.”

3. The Documentation Gap Finder

Developer ecosystems live or die on documentation quality.

Prompt: “As a developer trying to build an integration for the first time, what is confusing about this documentation? What is missing? What would make you give up and choose a different platform?”

Guardrail: AI can help identify ecosystem gaps and draft partner communications, but ecosystem relationships are fundamentally human. Use AI for the analysis, but build the relationships yourself.

Conclusion

An ecosystem strategy is not a nice-to-have for platform products. It is the difference between a product that grows linearly and one whose growth compounds. The core product is the engine. The ecosystem is the fuel.

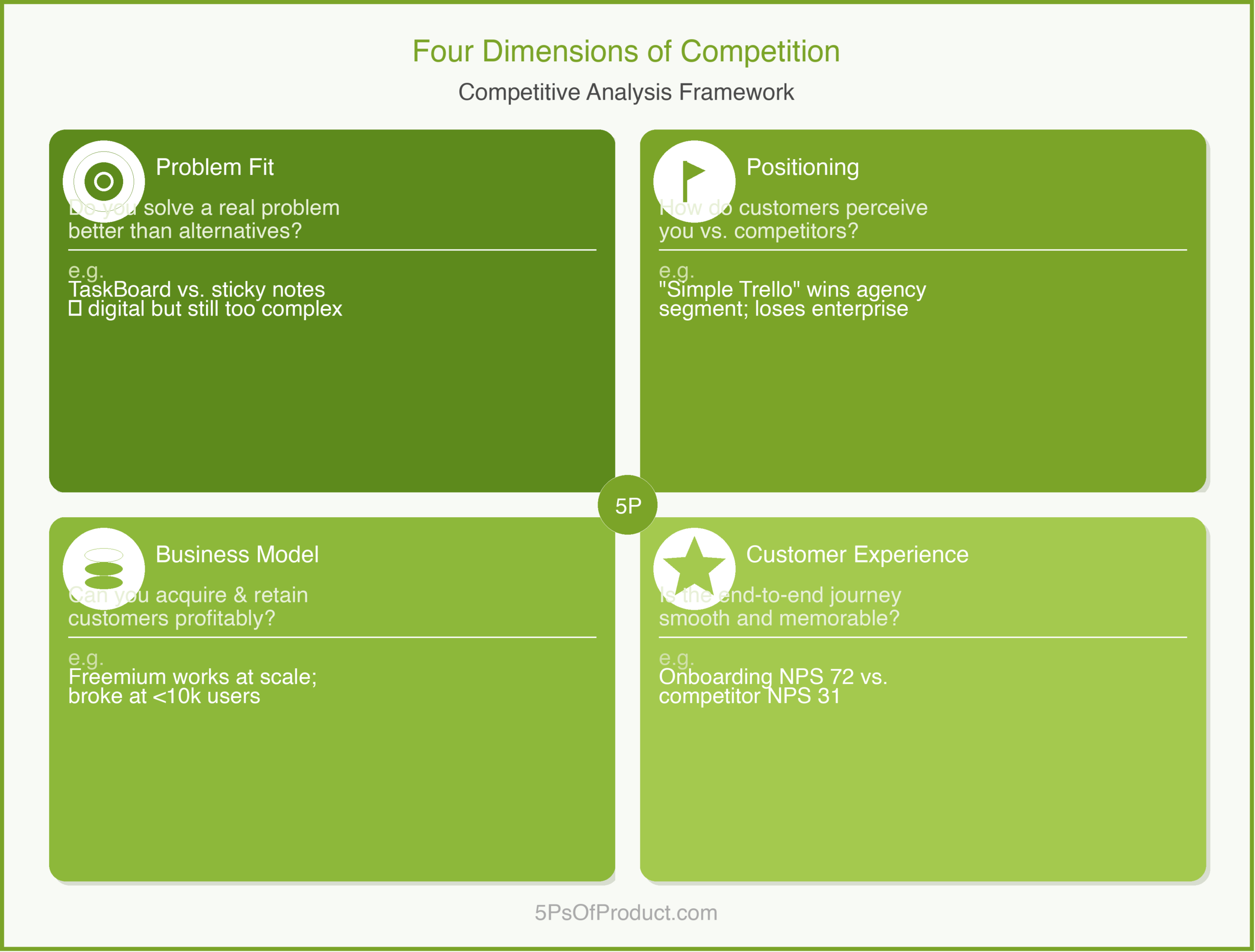

This connects to your competitive analysis. When you map the landscape, look beyond the product. A competitor with a weaker product but a stronger ecosystem will often win. And your go-to-market strategy should include your ecosystem as a central pillar, not an afterthought.

What do you think? Comments are welcome.