Early in my career, I built a feature that 15 customers had asked for. We shipped it. Almost nobody used it. The customers were telling the truth — they did want the feature. But they did not need it. The real problem was something they had not thought to articulate, and I had not thought to ask about.

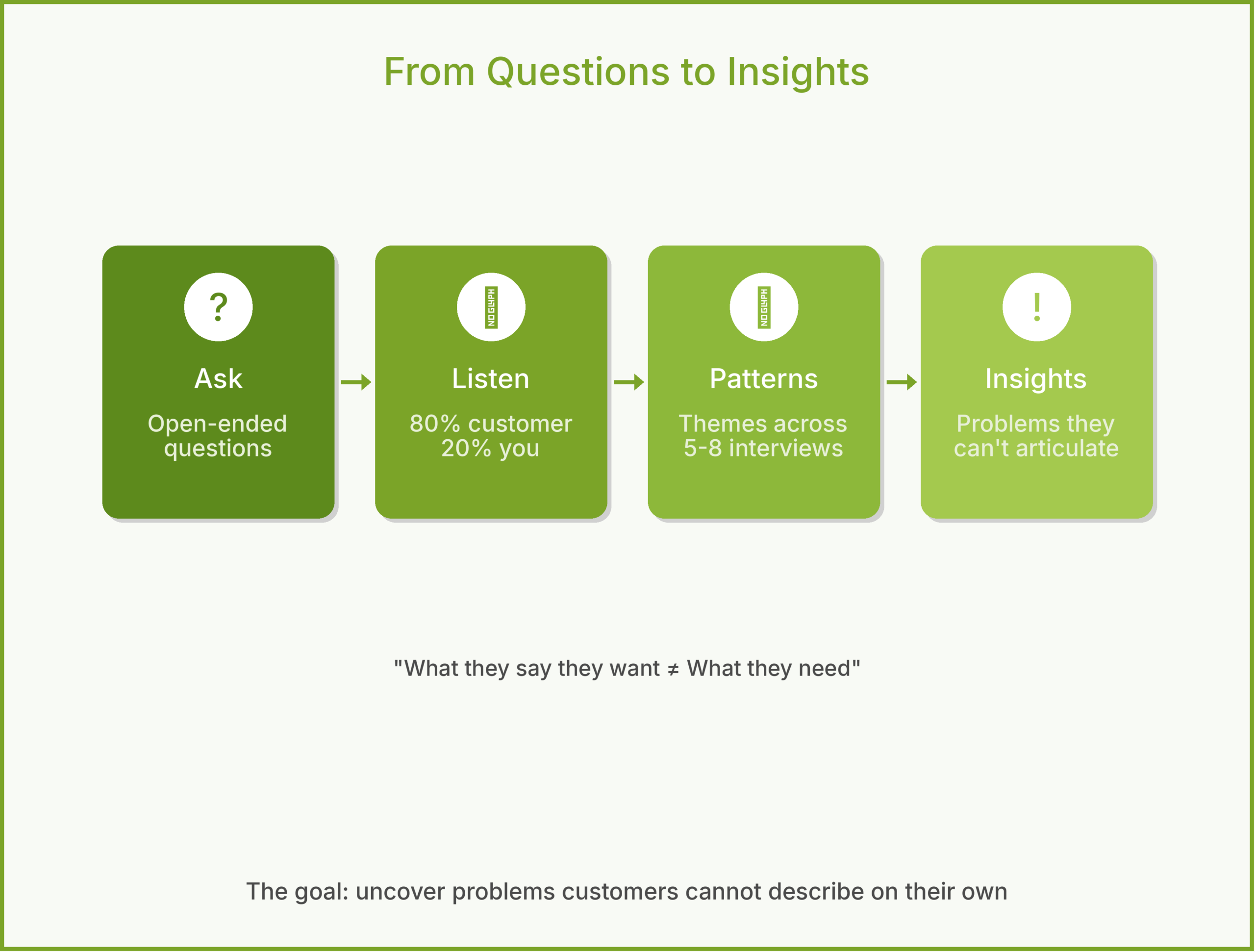

That experience changed how I approach customer research. The goal of a customer interview is not to collect feature requests. It is to uncover problems that customers may not be able to describe on their own.

The Trap: Asking What People Want

When you ask “what features would you like?” you get a list of solutions. People are generally good at describing what annoys them and bad at designing solutions. If you had asked web users in 1995 what they wanted, they would have said “faster loading pages.” The job of the PM is to hear the frustration and trace it back to the root cause.

I call this The Solution Trap — customers describe the fix they have already imagined instead of the pain they actually feel. Your job is to sidestep the trap and get to the real problem underneath.

What Is a Customer Interview?

A customer interview is a structured, one-on-one conversation designed to uncover real problems — not to validate your ideas or collect feature requests. It is different from a usability test (which evaluates a specific interface) or a survey (which quantifies known issues at scale). The interview is exploratory. You are trying to learn something you do not already know.

The best framework I have found for this is Teresa Torres’ continuous discovery approach: interview at least one customer every week, not in big annual batches. Small, frequent conversations keep you grounded in reality as your product evolves.

Planning the Interview

Recruit the right people. Interview current users, churned users, and people who evaluated your product but chose a competitor. Each group tells you something different. Current users reveal usability issues. Churned users reveal unmet needs. Lost deals reveal competitive gaps.

Write a discussion guide. Not a script — a guide. List 8-10 open-ended questions organized by topic. Start broad (“Tell me about the last time you had to…”) and narrow gradually (“Walk me through exactly what happened”). Leave room to follow unexpected threads.

Target 5-8 interviews per round. Jakob Nielsen’s usability-testing research found that 5 participants uncover roughly 85% of usability issues, and interviews tend to show the same diminishing returns: after 8, you start hearing the same themes. More interviews per round are rarely better than more rounds of interviews over time.
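The diminishing returns behind that number can be sketched with the Nielsen-Landauer model, where the share of problems found by n participants is 1 - (1 - L)^n. The snippet below assumes Nielsen's published average of L = 0.31 for usability tests. The function name is my own, and nobody has established what L looks like for exploratory interviews, so treat the output as an illustration of the curve, not a prediction.

```python
def share_of_problems_found(participants: int, discovery_rate: float = 0.31) -> float:
    """Nielsen-Landauer estimate: 1 - (1 - L)^n.

    discovery_rate (L) defaults to 0.31, the average Nielsen reports
    for usability tests. It is an assumption for any other method.
    """
    if participants < 0:
        raise ValueError("participants must be zero or more")
    return 1 - (1 - discovery_rate) ** participants


for n in (1, 3, 5, 8):
    print(f"{n} participants: {share_of_problems_found(n):.0%}")
# 1 participants: 31%
# 3 participants: 67%
# 5 participants: 84%
# 8 participants: 95%
```

Going from 5 to 8 participants adds roughly ten percentage points, which is why a second round with fresh questions usually teaches you more than a longer first round.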

A Sample Discussion Guide

For a fictional company called InsightBoard that builds a PM analytics dashboard, a shortened guide might look like this:

- “Tell me about your typical Monday morning. How do you figure out what to focus on this week?”

- “Walk me through the last time you needed to make a data-driven product decision. What did you do?”

- “Where did you go for the data? How long did it take?”

- “What was frustrating about that process?”

- “If that frustration disappeared tomorrow, what would be different about your work?”

- “Tell me about a time you made a product decision you later regretted. What information would have changed that?”

- “How do you share product data with your team or stakeholders today?”

Notice: no question mentions InsightBoard or asks about features. Every question is about the customer’s life, their workflow, and their frustrations.

During the Interview

Listen more than you talk. A good ratio is 80% customer, 20% you. Your job is to ask the question and then get out of the way. The instinct to explain your product or defend your choices is strong. Resist it.

Follow the energy. When a customer’s voice changes — they get animated, frustrated, or suddenly quiet — that is a signal. Dig deeper. “You mentioned that was frustrating. Tell me more about that.”

Ask for specifics. “Usually” and “sometimes” hide the truth. Push for: “Can you tell me about the most recent time that happened? Walk me through it step by step.” Specific stories reveal more than generalizations.

Avoid leading questions. “Don’t you think it would be helpful if…?” is not a question. It is a pitch. Same with “Would you use a feature that…?” The answer is always yes, and it means nothing.
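If you want a quick sanity check before a human reviews your guide, a rough screen for leading questions might look like the sketch below. The patterns are my own assumptions, built from the two examples above. They will miss plenty of leading questions and are no substitute for a careful read.

```python
import re

# Assumed patterns based on the two examples in the text; extend them for your own guide.
LEADING_PATTERNS = [
    r"^\s*(don't|wouldn't) you\b",           # "Don't you think..."
    r"^\s*would you (use|like|want|pay)\b",  # "Would you use a feature that..."
    r"\bwould it be helpful\b",
]


def flag_leading_questions(questions: list[str]) -> list[str]:
    """Return the questions that match any of the assumed leading patterns."""
    flagged = []
    for question in questions:
        # Lowercase and normalize curly apostrophes so "Don’t" matches "don't".
        normalized = question.lower().replace("’", "'")
        if any(re.search(pattern, normalized) for pattern in LEADING_PATTERNS):
            flagged.append(question)
    return flagged


guide = [
    "Tell me about the last time you had to share product data.",
    "Don’t you think it would be helpful if the dashboard sent alerts?",
    "Would you use a feature that exports charts?",
    "What was frustrating about that process?",
]
for question in flag_leading_questions(guide):
    print("Rewrite:", question)
```

Anything it flags is worth rewording as a question about past behavior. Anything it passes still needs a second pair of eyes.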

An Example: InsightBoard

InsightBoard’s team interviews 6 product managers at mid-size SaaS (software-as-a-service) companies. They expect to hear about dashboard features — better charts, more integrations, real-time data.

Instead, three themes emerge. First, PMs are spending 3-4 hours per week assembling data from different tools before they can analyze anything. Second, when they share data with executives, they spend more time explaining the methodology than discussing the insights. Third, PMs do not trust their own data — they worry about conflicting numbers across tools.

The original product idea was “a better analytics dashboard.” After the interviews, the product vision shifts to “eliminate the 3-4 hours per week PMs spend assembling data before they can think.” That is a very different product, and a much more valuable one.

Common Mistakes

Confirmation bias. You already believe your product solves a problem, so you unconsciously steer conversations toward confirming that belief. The fix: have someone outside the product team review your discussion guide for leading questions.

Interviewing only fans. Happy customers are easy to recruit and pleasant to talk to. But they will not tell you what needs fixing. Make churned users and prospects you lost to competitors at least 30% of your interview pool.

Taking notes, not synthesizing. Raw interview notes are useless without synthesis. After each round, look for patterns across interviews. What themes appeared in 3 or more conversations? Where did customers disagree? The synthesis is where insights live.
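The "3 or more conversations" check is easy to do by hand for one round, but a small script helps once rounds pile up. Here is a minimal sketch, assuming you have already tagged each interview with the themes you heard. The interview IDs, tags, and function name are all made up for illustration, loosely following the InsightBoard example.

```python
from collections import Counter

# Hypothetical tags from one round of six interviews (illustrative data only).
tagged_interviews = {
    "pm_01": {"assembling data", "explaining methodology"},
    "pm_02": {"assembling data", "conflicting numbers"},
    "pm_03": {"assembling data", "explaining methodology", "conflicting numbers"},
    "pm_04": {"explaining methodology", "chart options"},
    "pm_05": {"assembling data", "conflicting numbers"},
    "pm_06": {"chart options"},
}


def recurring_themes(tagged: dict[str, set[str]], min_interviews: int = 3) -> list[tuple[str, int]]:
    """List themes heard in at least min_interviews interviews, most common first."""
    # Each interview counts a theme once, however often it came up in that conversation.
    counts = Counter(theme for themes in tagged.values() for theme in set(themes))
    recurring = [(theme, n) for theme, n in counts.items() if n >= min_interviews]
    return sorted(recurring, key=lambda item: (-item[1], item[0]))


for theme, n in recurring_themes(tagged_interviews):
    print(f"{theme}: {n} of {len(tagged_interviews)} interviews")
# assembling data: 4 of 6 interviews
# conflicting numbers: 3 of 6 interviews
# explaining methodology: 3 of 6 interviews
```

The count only tells you where to look. The tagging itself, and deciding what a theme means, is still the synthesis work.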

How to Use With AI

AI tools can accelerate interview synthesis without replacing the interviews themselves.

Build your discussion guide. Describe your product and target customer to Claude or ChatGPT. Ask it to generate 10 open-ended, non-leading interview questions. Then edit them — the AI will get you 70% of the way, and your product knowledge fills the rest.

Synthesize themes. After a round of interviews, paste your anonymized notes into an AI and ask: “What are the top 5 themes across these interviews? Where do participants agree? Where do they disagree? What surprised you?” The AI is good at pattern-matching across large volumes of qualitative data.
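If you run this after every round, it helps to assemble the prompt the same way each time. Below is a minimal sketch that only builds the text to paste. It calls no AI service, the function name is hypothetical, and it assumes you have already anonymized the notes yourself.

```python
SYNTHESIS_QUESTIONS = (
    "What are the top 5 themes across these interviews? "
    "Where do participants agree? Where do they disagree? "
    "What surprised you?"
)


def build_synthesis_prompt(anonymized_notes: list[str]) -> str:
    """Label each interview's notes and append the synthesis questions."""
    if not anonymized_notes:
        raise ValueError("Provide notes from at least one interview.")
    sections = [
        f"Interview {number}:\n{notes.strip()}"
        for number, notes in enumerate(anonymized_notes, start=1)
    ]
    return "\n\n".join(sections) + "\n\n" + SYNTHESIS_QUESTIONS


# Placeholder notes for illustration only.
prompt = build_synthesis_prompt([
    "Spends Monday mornings pulling numbers from three tools.",
    "Executives question the methodology before the findings.",
])
print(prompt)
```

Numbering the interviews makes it easier to ask the AI which conversations support a theme, and then to check that claim against your own notes.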

The guardrail: never let AI replace the interview itself. The whole point is hearing real humans describe real problems in their own words. AI can help you prepare and synthesize. It cannot sit across from a customer and notice when their voice changes.

Why This Matters

Customer interviews are the foundation of the Problem phase in the 5Ps framework. Get them right and every subsequent decision — what to build, how to price it, how to market it — becomes clearer. Get them wrong and you spend months building something elegant that nobody needs.

The best interview I ever conducted lasted 22 minutes. The customer said one sentence that reframed our entire product strategy. You cannot get that from a survey. And you cannot get it from an AI. Some insights only come from sitting across from a real person and listening.

What do you think? I would love to hear about your interview techniques. Comments are welcome.