Early in my career, I watched a team spend six weeks building what they called a “comprehensive benchmark suite.” They tested their product against three competitors, generated impressive charts, and published the results on their blog. Within a week, two of the three competitors published rebuttals that poked holes in the methodology. One pointed out the tests used unrealistically small datasets. Another showed that the default configuration had been changed for the competitor but not for the benchmarking team’s own product. The blog post came down quietly, and the team’s credibility took a hit.

That experience taught me something I have carried through every product role since: a benchmark that cannot withstand scrutiny is worse than no benchmark at all. Credible benchmarking is not about proving you are the best. It is about giving customers the evidence they need to make an informed decision.

What Is Product Benchmarking?

Product benchmarking is the practice of measuring and comparing your product’s performance against alternatives using reproducible, transparent methods. It applies to any product where measurable performance matters, whether that is throughput, latency, resource efficiency, or accuracy.

In the 5Ps framework, benchmarking lives in the Promotion phase. It is one of the most powerful tools for translating technical superiority into market credibility. But it sits downstream of your competitive analysis, because you need to understand the landscape before you decide what to measure.

Done well, benchmarking tells a story backed by data. Done poorly, it tells the market you are willing to cherry-pick results.

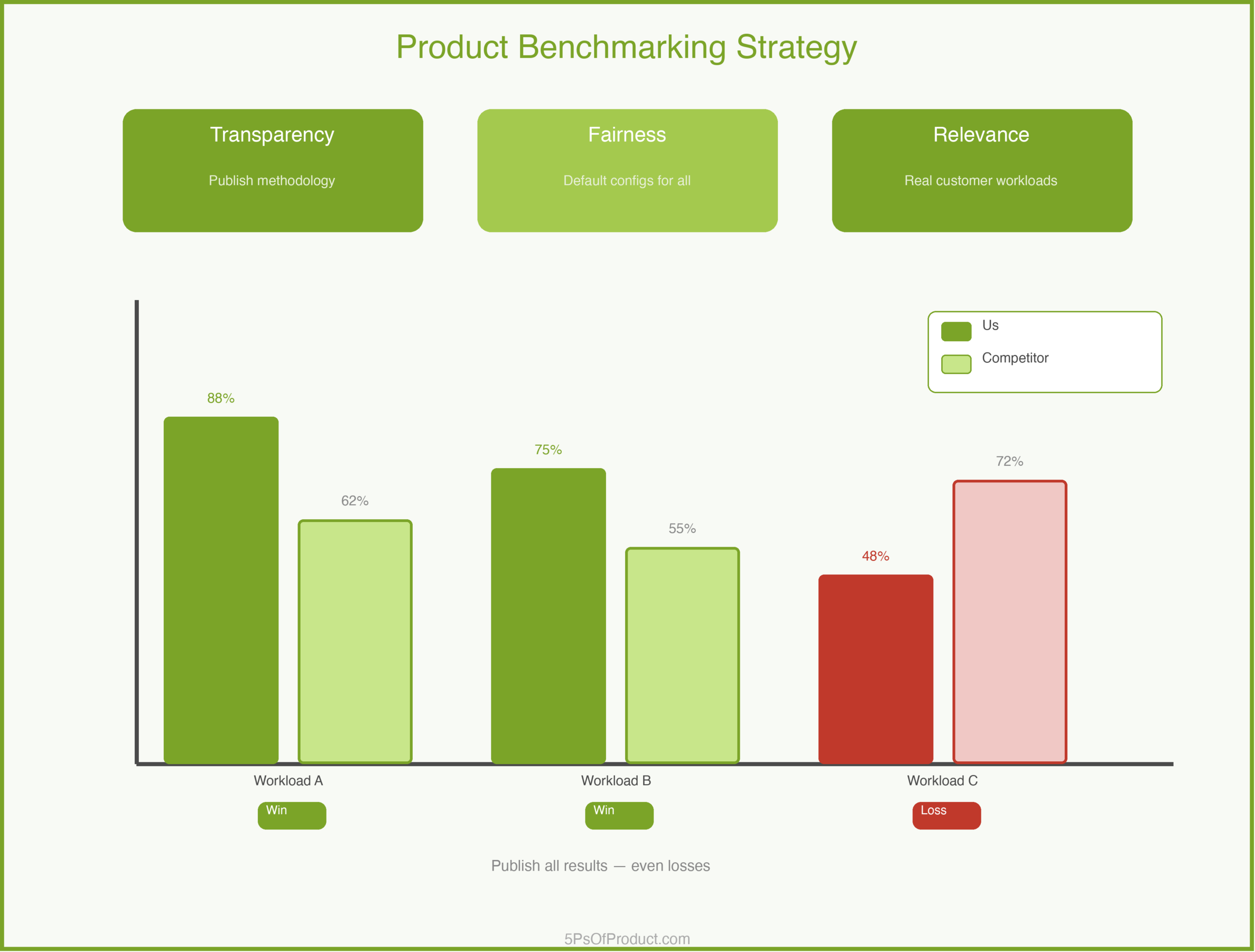

The Three Pillars of Credible Benchmarks

1. Transparency

Publish your methodology. All of it. What hardware did you use? What software versions? What configuration settings? What dataset? If someone cannot reproduce your results from the information you provide, your benchmark is an opinion, not evidence.

The bar I set is simple: could a skeptical competitor reproduce these results? If the answer is no, you are not done.
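One way to make that bar concrete is to generate a machine-readable manifest with every run and publish it next to the results. The sketch below is a minimal illustration, not a standard format: the function name `build_manifest`, the field names, and the placeholder product names and versions are all assumptions made for the example.

```python
import hashlib
import json
import platform
import sys


def build_manifest(product_versions, config, dataset_bytes):
    """Collect the details a reader would need to rerun the benchmark."""
    return {
        "hardware": {
            "machine": platform.machine(),
            "processor": platform.processor(),
        },
        "software": {
            "os": platform.platform(),
            "python": sys.version.split()[0],
            "products": dict(product_versions),
        },
        "configuration": dict(config),
        # A content hash lets readers confirm they are using the same dataset.
        "dataset_sha256": hashlib.sha256(dataset_bytes).hexdigest(),
    }


# Placeholder inputs; a real run would pass actual versions and dataset contents.
manifest = build_manifest(
    product_versions={"product_a": "x.y.z", "product_b": "x.y.z"},
    config={"product_a": "defaults", "product_b": "defaults"},
    dataset_bytes=b"placeholder dataset contents",
)
print(json.dumps(manifest, indent=2, sort_keys=True))
```

The standard library only sees part of the hardware picture, so details such as memory, storage, and network would still need to be written down by hand.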

2. Fairness

Use default configurations for every product, including your own. Test the same workload across all products. If you tune your product and leave competitors on defaults, someone will notice, and the resulting backlash will overshadow any performance advantage you actually have.
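A fairness rule like this can be checked automatically before anything is published. The following is a rough sketch under stated assumptions: the run records, the setting names (`cache_mb`, `threads`), and the `fairness_violations` helper are hypothetical and stand in for whatever your own harness records.

```python
def fairness_violations(runs, defaults):
    """Return a list of human-readable problems; an empty list means the checks passed."""
    problems = []

    # Every product should have been tested on the same workload.
    workloads = sorted({run["workload"] for run in runs})
    if len(workloads) > 1:
        problems.append(f"runs use different workloads: {workloads}")

    # Every product should be running with its own documented defaults.
    for run in runs:
        expected = defaults[run["product"]]
        actual = run["config"]
        changed = sorted(
            key for key in set(actual) | set(expected)
            if actual.get(key) != expected.get(key)
        )
        if changed:
            problems.append(f"{run['product']} departs from defaults on: {changed}")
    return problems


# Hypothetical defaults and run records, for illustration only.
defaults = {
    "product_a": {"cache_mb": 256, "threads": 4},
    "product_b": {"cache_mb": 128, "threads": 8},
}
runs = [
    {"product": "product_a", "workload": "bulk_writes", "config": {"cache_mb": 1024, "threads": 4}},
    {"product": "product_b", "workload": "bulk_writes", "config": {"cache_mb": 128, "threads": 8}},
]

violations = fairness_violations(runs, defaults)
for problem in violations:
    print(problem)
```

Here the check flags `product_a` because its cache setting was raised above the default while `product_b` was left alone, which is exactly the asymmetry a competitor would point out.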

3. Relevance

Measure what customers actually care about. A benchmark showing you are 3x faster on a workload nobody runs is trivia. Talk to your sales team and your customers. What performance questions come up in evaluations? Those are your benchmark scenarios.

A Concrete Example: QueryVault

Imagine a company called “QueryVault” that builds a high-performance database. They compete in a crowded market where every vendor claims to be the fastest.

QueryVault’s PM decides to build a benchmarking program:

Define the workloads. Instead of inventing artificial tests, QueryVault talks to 15 customers about their actual usage patterns. Three workloads emerge as representative: high-volume writes, complex analytical queries, and mixed workloads.

Establish the rules. They publish a methodology document alongside results. Every product runs with default configurations. Test infrastructure is identical. Test scripts go in a public repository.

Run the tests honestly. QueryVault wins on two of three workloads. On mixed workloads, a competitor edges them out. The PM’s first instinct is to exclude that test. Instead, they publish all three results and add context explaining why mixed workloads are challenging for their architecture.

That honesty becomes their strongest marketing asset. Customers trust a vendor who admits a weakness far more than one who claims to be the best at everything. As Teresa Torres describes in Continuous Discovery Habits, building trust with your audience requires showing your work, not just your wins.

Designing the Benchmark Program

A single benchmark report is a snapshot. A benchmarking program is a strategic asset.

Cadence. Run benchmarks quarterly. This builds a track record and lets you show improvement over time.

Versioning. Always test against the latest generally available version of each product. Old versions are easy targets but undermine credibility.

Independence. If you can afford it, have a third party validate your benchmarks. But even without third-party validation, publishing your methodology goes a long way.

Common Benchmarking Mistakes

Cherry-picking scenarios. Testing only workloads where you win. The fix: test the workloads your customers actually run.

Stale comparisons. Benchmarking against a competitor’s version from two years ago. The fix: always test the latest release.

Ignoring setup complexity. Showing raw performance without accounting for configuration effort. As Martin Fowler points out in his writing on software metrics, a number without context is just noise. The fix: report the setup and tuning effort behind each result alongside the raw numbers.

Vanity metrics. Reporting peak throughput when your customers care about p99 latency. This connects directly to product analytics: measure what your users experience, not what makes your slides look good.
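To see why the choice of metric matters, it helps to compute a tail percentile next to a friendlier-looking summary. The example below is a minimal sketch using made-up latency samples and a simple nearest-rank percentile; the `percentile` helper is written for this illustration, and a real harness may prefer a different percentile definition.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up latencies in milliseconds: 98 fast requests and 2 slow ones.
latencies_ms = [10.0] * 98 + [500.0] * 2

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f} ms  p50={p50_ms:.1f} ms  p99={p99_ms:.1f} ms")
```

With these invented samples the mean and median look healthy while the p99 exposes the slow requests that a small share of users would actually hit, so a report that leads with the flattering number hides the experience customers ask about.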

Why Benchmarking Matters for GTM

Benchmarks are not just technical documentation. They are a go-to-market strategy tool. When a prospect is comparing your product to an alternative, a well-designed benchmark gives your sales team a credible, data-backed answer.

Benchmarks serve different audiences: developers want raw numbers and reproducible tests; decision-makers want a summary and a story; analysts want independence and transparency.

How to Use AI for Benchmarking

1. The Methodology Reviewer

Before you publish, use AI to find weaknesses in your methodology.

Prompt: “You are a skeptical competitor who wants to discredit these benchmark results. List every methodological weakness you can find. Be specific about what is missing or could be challenged.”

2. The Results Narrator

Benchmark data needs to be translated into a story for non-technical audiences.

Prompt: “Summarize these benchmark results for a VP of Engineering who has five minutes. Focus on what matters for their decision. Highlight both strengths and areas where we trail.”

3. The Workload Designer

Identifying the right benchmark scenarios requires understanding customer usage patterns.

Prompt: “Based on these customer conversations, suggest 3-5 benchmark scenarios that would be most meaningful to our target buyers. For each, explain why it matters.”

Guardrail: AI can help you frame and communicate benchmarks, but it cannot validate your numbers. Every data point in a published benchmark must come from an actual test run.

Conclusion

Product benchmarking is a discipline, not a marketing exercise. The teams that do it well gain a lasting credibility advantage. The teams that cut corners lose trust in ways that are hard to recover from.

The hardest part is publishing results where you don’t win. But that honesty is exactly what makes benchmarks credible.

What do you think? Comments are gladly welcome.